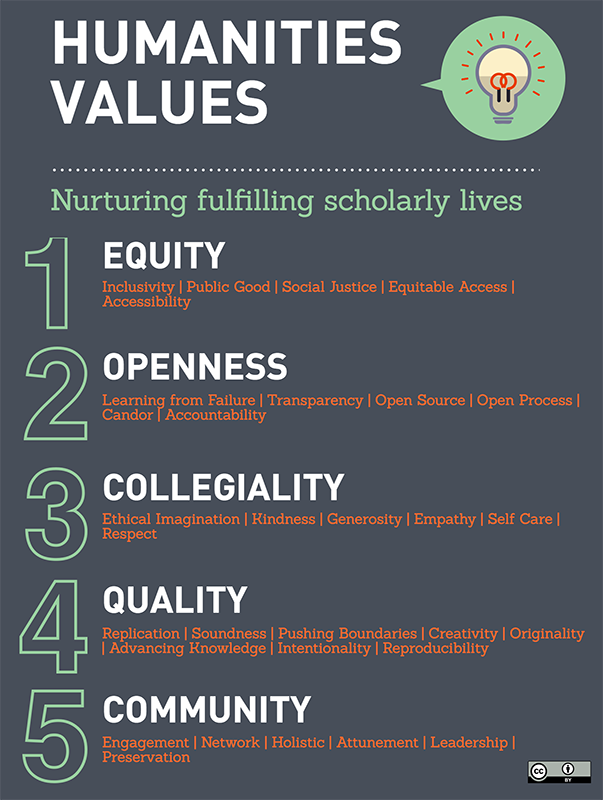

When we first introduced the work of the HuMetrics group to the other TriangleSCI teams last October, we met with some resistance. Much like peer groups in the run-up to an election, we had become used to having our own ideas reflected and amplified internally. Four days of intensive brainstorming had inoculated us against the controversial and slightly scary nature of much of what we’re trying to do, and when we chose to present an extreme example of the potential application of the HuMetrics framework ("What if we took a values-based approach to assessing the quality of academic mentoring?") we (understandably, in retrospect) got significant pushback. What we thought we were proposing was a way to recognize and reward the often hidden labor of peer and student mentoring by paying attention to the ways in which mentoring can embody not only the core values we propose (equity, openness, collegiality, quality, and community) but also many of the values we had grouped under those core values: transparency, empathy, accountability, candor, engagement, and respect. What our audience heard us propose, however, was something altogether different: a neoliberal performance metric that would measure and assess the quality of time and interpersonal interactions and tie the results to HR, promotion, and tenure decisions.

What this made us realize was that while we had brainstormed in constant cognizance of our aim ("nurturing fulfilling scholarly lives"), other people were and would be coming to the project with very real concerns about the potential abuse of any kind of metrics in an increasingly quantified academy. They would also have very legitimate fears about the additional work it might take to interrogate the whole gamut of scholarly practices with an eye to improving the lived experience of academe. To begin our discussion of values-based metrics with mentoring was to take things many steps too far, to move much too rapidly from an idea to the further reaches of its potential implementation. When, the following day, we demonstrated how one might apply our proposed values framework to a syllabus, the project’s decidedly un-neoliberal aims were understood and appreciated much more quickly.

It was a hard lesson, but an important one. We understand now that to bring about substantial culture change in scholarly practice, we need to start by demonstrating how one might use a values framework to interrogate the more tangible products of that practice: articles and monographs, sure, but also digital projects, syllabi, annotations, and peer review. How might academic culture change if we paid attention to equity, openness, collegiality, quality, and community in the process of creating these products as well as in the final products themselves?

During our time at TriangleSCI 2016, the HuMetrics team made a commitment to continue the work we began, and we have been fortunate enough to do so with the support of our respective institutions (without speaking for them, of course). With a Social Science Research Council (SSRC) staff member on the team, however, we realized quickly that limiting our work to the humanities without taking the social sciences into account was, in a way, reinforcing an artificial and often institutionally imposed divide between two fields of research that have much more in common than not. Both humanists and social scientists face many of the same problems when it comes to showcasing the value of our work or combating predatory publishing practices and corrosive academic behavior. By bringing the two fields together, we believe we’ll be able to make an even stronger case for a values-based framework on which to base assessments of excellence.

A schedule of regular biweekly brainstorming meetings, two in-person meetings at the SSRC offices in New York, and several intense bouts of co-writing and peer editing (minimally interrupted by transatlantic moves, promotions, presidential elections, and small and not-so-small children) have resulted in a close-knit team that’s excited to extend the HuMetricsHSS project beyond internal brainstorming and establish a proof of concept that might actually change things for the better.